Nowadays, it is unthinkable for any company that services such as email or real-time data analysis (BIG DATA) are not permanently available.

‘Here and now’ is the philosophy of the 21st century. Nobody has the time to try it later and there is no second chance.

In this context, having your services available on a permanent basis, what is technically known as high availability, or HA, is key.

The end user wants requests to be met as soon as they are made, and he does not accommodate failure. We can’t let him down.

The challenge lies in the fact that errors, unfortunately, are unavoidable. No matter how robust the system, a machine might stop working or a service might not respond, and as a result requests might not be handled correctly.

Even if it all goes well, whenever there’s a need to update the system, to introduce improvements, new features, or simply to fix bugs, system availability will be compromised.

For this reason, having a high availability system, which must work and be available 24x7, is nowadays an indispensable condition.

But what exactly are we talking about?

A system’s High Availability is based on 3 fundamental concepts: Redundancy, Monitoring and Recovery capacity.

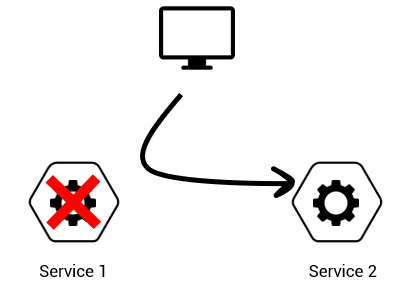

Achieving high availability is intrinsically related to Redundancy, in such a way that if a machine stops working, another one is available to work in its place.

If we have a cluster where the services of the different nodes work in a coordinated way, if one of the nodes fails, others can come out to the rescue and carry out the required work. In order to guarantee high availability in the cluster, the software must be redundant, and the hardware should be redundant as well.

Just as important as redundancy, the ability to detect a failure guarantees the system’s capacity to monitor and know when a service is not working properly, in order to distribute its workload through other available services.

Finally, the recovery capacity of the service is essential, allowing the system to restart at a point prior to the failure and restore its high availability.

The non-availability of a system may imply loss of information that, depending on the function of the service, could be vital for the operations of a company. For this reason, nowadays, it is essential to shield critical services through the adoption of a persistence solution.

Our solution in Mule

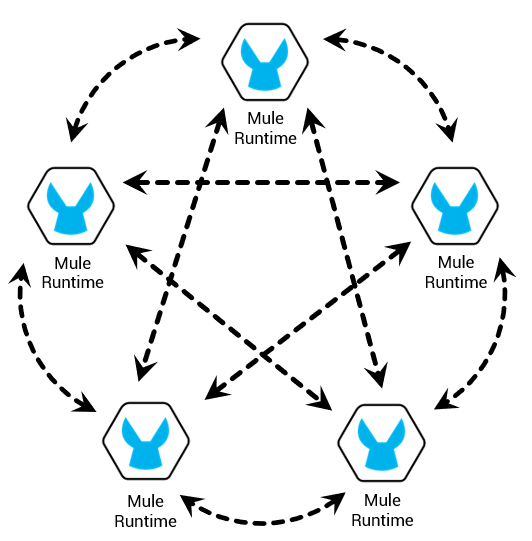

As experts in MuleSoft and Apache Ignite, we set out to merge the best of both technologies to achieve a high availability system based on Mule, enriched with Apache Ignite features.

The challenge: to provide a community version of Mule with high availability, horizontal scalability, distributed processing, fault tolerance, and persistence.

For this we’ve developed an extension of the Mule core that works in a transparent way - once installed and configured you will be able to continue working normally - but enjoying clustering as an extra tool.

This Mule Core extension is made up of a series of Factories, alternative to those provided by Mule by default, to manage its VM queues, Object Store and Locks.

Basically, the functionality of this core extension is to convert VM Queues, Object Stores and Locks into distributed cluster structures to assign workload, store data and coordinate processes.

With this approach, we manage that both existing and new developments work in clusters in a transparent way.

Moreover, as it does not affect the development of your projects, you have the ability to migrate them to the Enterprise version without losing an iota of your work.

Now you will be able to equip your version of Mule with:

- High Availability with no limitation in the number of Mule instances

- Horizontal scalability

- Distributed persistence of information

- Fault tolerance

The Mule Core extension, though powerful, is limited to the Mule Core API. To overcome its limitations, we have developed Apache Ignite & GridGain Connectors that substantially enriche the distributed information processing capabilities. We will delve into their benefits in an upcoming blog post.